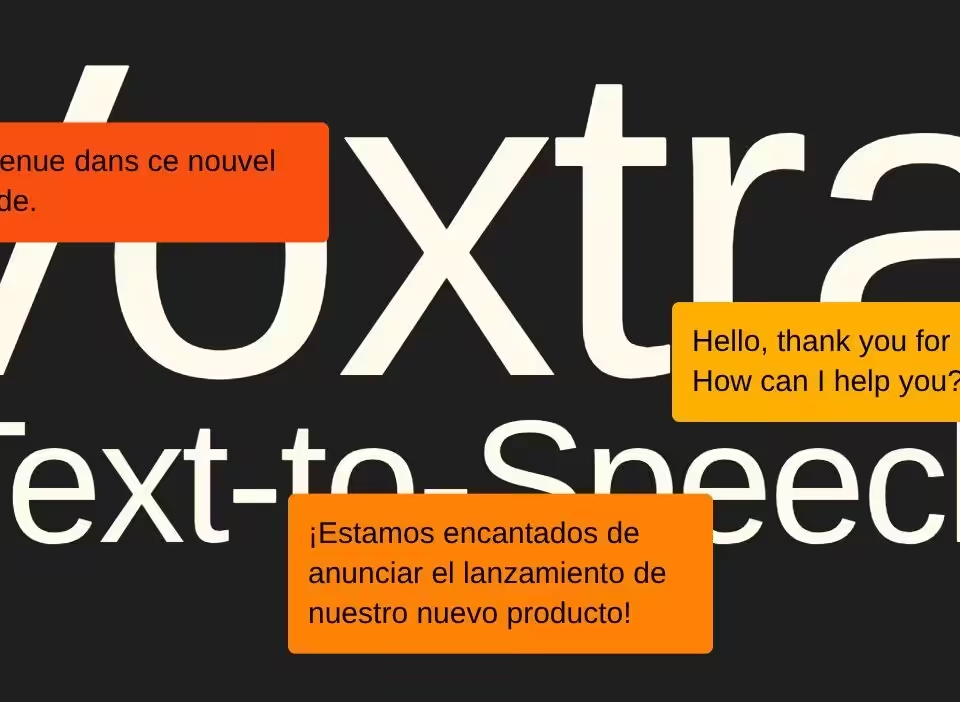

Wikipedia just drew a firm line against AI-generated content in its massive online encyclopedia. Volunteer editors voted overwhelmingly to ban the use of large language models (LLMs) to create or rewrite articles, because such text often breaks the site's core rules on accuracy and sourcing. The English edition alone holds more than 7.1 million articles, and substantive writing on them now stays firmly in human hands. 📖

What the new policy actually says

The updated guideline states that text generated by LLMs frequently violates Wikipedia's principles of verifiability and no original research. As a result, using tools such as ChatGPT or Google Gemini to produce or overhaul article content is now prohibited. The policy emerged from a request for comment (RfC) that closed on March 20, 2026, with a vote of roughly 44 to 2 in favor.

Two clear exceptions remain. Editors may still use AI to help translate articles from other-language Wikipedias. They can also ask a model to suggest basic copy edits to their own writing, but only with careful human review and only if the AI adds no new facts or meaning. The policy warns that LLMs can quietly shift the sense of a sentence until it no longer matches the cited sources.

This shift builds on earlier steps. In 2025 the community introduced speedy deletion for suspected AI slop pages, and a dedicated WikiProject AI Cleanup works to hunt down problematic entries. Details appear on Wikipedia's official guideline page on writing articles with large language models.

Why editors pushed for the ban

Human volunteers had grown tired of spending hours fixing the hallucinations and fake references that AI tools insert in seconds. That asymmetry of effort put real strain on the editor base. Jimmy Wales, the site's founder, had earlier described AI output as a mess and said current models still fall short of serious encyclopedia work. Reports from 404 Media and Slate captured the community frustration that finally tipped the vote.

Similar restrictions surfaced on the German Wikipedia earlier in 2026, showing that the issue spans language editions. Broader coverage in The Verge and TechCrunch highlighted how the policy targets the root of the problem, AI-written article text, while leaving room for limited but helpful AI assistance.

What this means for the wider web

Wikipedia has long stood as one of the last major holdouts against automated content floods. By keeping core writing human, the site protects its reputation for reliability at a time when AI slop spreads across search results and social platforms.

The decision leaves open whether future model improvements could change the rules. Wales has said he would not rule out AI entirely in the long term, but for now the community has chosen caution over convenience.

Will other volunteer-driven knowledge projects follow Wikipedia's lead, or will the ban simply push AI content creation elsewhere on the internet?