
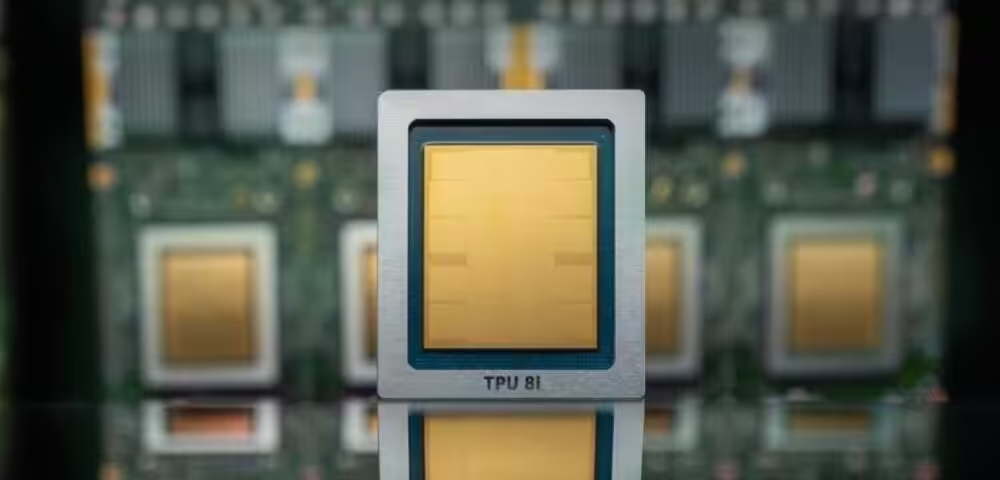

April 22, 2026

Google Cloud is making another bid to chip away at Nvidia’s dominance in AI computing. The company announced its eighth generation of custom AI chips, splitting them into two specialized variants for the first time.

The move reflects the maturing AI market, where companies are starting to separate training workloads from inference – the ongoing use of AI models after they’re built. Google’s TPU 8t targets model training, while the TPU 8i focuses on inference operations that happen when users submit prompts to AI systems.

Google promises significant performance gains with these new tensor processing units (TPUs). The company claims they deliver up to 3x faster AI model training compared to previous generations, with 80% better performance per dollar. Perhaps most notably, Google says it can cluster more than 1 million TPUs to work together, potentially offering massive compute power at lower energy costs.

The timing matters. As AI adoption explodes across enterprises, cloud providers are racing to offer cost-effective alternatives to Nvidia’s expensive GPU infrastructure. Custom chips like Google’s TPUs could help companies train and run AI models without paying premium prices for Nvidia hardware.

But Google isn’t declaring war on Nvidia just yet. Like Microsoft and Amazon, Google is positioning its custom chips as supplements to, not replacements for, Nvidia-based systems. In fact, Google promises its cloud will offer Nvidia’s latest Vera Rubin chip later this year.

This cautious approach makes sense given Nvidia’s market position. Chip analyst Patrick Moorhead noted on X that back in 2016 he predicted Google’s first TPU could hurt Nvidia. Since then, Nvidia has grown into a nearly $5 trillion company – not exactly validating that prediction.

The reality is more nuanced. If Google succeeds as an AI cloud provider, it could actually drive more Nvidia business in the short term, even as some workloads shift to Google’s chips. The AI market is growing fast enough that multiple chip architectures can coexist.

Google is also deepening its partnership with Nvidia in other areas. The companies are collaborating on networking technology to make Nvidia-based systems run more efficiently in Google’s cloud. They’re specifically working on Falcon, Google’s software-based networking technology that the company open-sourced in 2023.

The broader trend here is clear: major cloud providers are all building custom chips to reduce their dependence on Nvidia over time. Amazon has its Trainium and Inferentia chips, Microsoft is developing its own silicon, and now Google is expanding its TPU lineup. Whether this eventually threatens Nvidia’s dominance remains an open question, but for now, it’s creating more options for companies looking to deploy AI at scale.