April 24, 2026

Meta has signed a major agreement with Amazon Web Services to deploy tens of millions of AWS Graviton processors, making the social media giant one of the largest Graviton customers worldwide. The deal, announced by AWS, represents a significant expansion of the companies’ long-standing partnership as Meta builds infrastructure for its next generation of AI systems.

The deployment reflects a shift in AI infrastructure needs. While GPUs remain essential for training large models, the rise of AI agents is creating massive demand for CPU-intensive workloads like real-time reasoning, code generation, and orchestrating complex multi-step tasks. These workloads require different processing capabilities than traditional AI training, making specialized chips like Graviton increasingly valuable.

Why this deal matters for AI infrastructure

The partnership signals how major tech companies are rethinking their approach to AI infrastructure. As organizations adopt AI agents that can reason, plan, and complete complex tasks autonomously, they need processors optimized for different types of work than traditional machine learning training.

Meta’s massive Graviton deployment will power various workloads, including supporting AI systems that handle billions of interactions while coordinating complex agent workflows. This type of work is CPU-intensive rather than GPU-dependent, making Graviton’s architecture particularly well-suited for the task.

What makes AWS Graviton5 different

Amazon designed Graviton chips specifically to make cloud computing faster, cheaper, and more energy efficient. The latest Graviton5 processor features several key improvements:

- 192 cores with enhanced processing power

- Cache that is five times larger than the previous generation’s

- Up to 33% lower latency for communication between cores

- Up to 25% better performance than Graviton4

- Built on advanced 3-nanometer chip technology

These specifications make Graviton5 particularly effective for AI agents that need to continuously process information and execute multi-step tasks in real time.
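To put the headline percentages in concrete terms, here is a minimal arithmetic sketch. It assumes, and this is an interpretation rather than something AWS states, that "up to 25% better performance" means up to 25% more throughput on the same task, and that the 33% figure applies directly to a baseline core-to-core latency. The function names and the baseline value of 100 are hypothetical, chosen only for illustration.

```python
def time_fraction_after_speedup(perf_gain: float) -> float:
    """Fraction of the original run time that remains when throughput
    rises by perf_gain (0.25 means 25% more work per unit of time)."""
    return 1.0 / (1.0 + perf_gain)


def latency_after_reduction(baseline: float, reduction: float) -> float:
    """Latency left after cutting a baseline by the given fraction."""
    return baseline * (1.0 - reduction)


if __name__ == "__main__":
    # Assumption: the "up to 25%" claim is read as a throughput gain.
    remaining = time_fraction_after_speedup(0.25)
    print(f"Run time relative to Graviton4: {remaining:.0%}")

    # Hypothetical baseline of 100 arbitrary latency units.
    print(f"Latency after a 33% cut: {latency_after_reduction(100.0, 0.33):.0f} units")
```

Under these assumptions, a 25% throughput gain shortens a fixed job by 20%, not 25%, which is worth keeping in mind when comparing "up to" figures across chip generations.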

Technical advantages for Meta’s AI systems

Graviton runs on AWS’s Nitro System, which uses dedicated hardware and software to deliver high performance and security. This setup enables Meta to access hardware directly while maintaining the flexibility to run its own virtual machines without performance compromises.

The Graviton5 instances also support Elastic Fabric Adapter (EFA), which enables low-latency, high-bandwidth communication between processors. This capability is crucial for Meta’s AI workloads, where large-scale tasks need to be distributed across many processors working together.

Industry implications and energy efficiency

The deal highlights the growing importance of energy-efficient computing as AI workloads scale up across the industry. Because AWS designs Graviton chips from the ground up and controls the full process from chip design through server architecture, it can optimize performance and efficiency in ways that standard processors cannot match.

This efficiency helps Meta pursue ambitious AI goals while meeting sustainability targets, because the infrastructure delivers more performance without giving up energy efficiency.

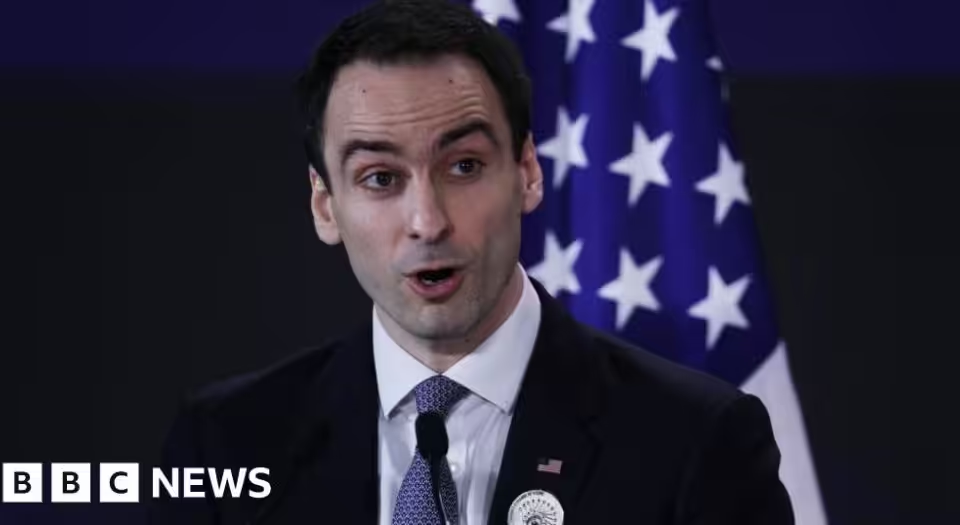

“This isn’t just about chips; it’s about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide,” said Nafea Bshara, vice president and distinguished engineer at Amazon.

“As we scale the infrastructure behind Meta’s AI ambitions, diversifying our compute sources is a strategic imperative. AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale,” said Santosh Janardhan, head of infrastructure at Meta.